Today Kubernetes has become the de facto standard for deploying applications. To understand what happens behind the scenes when you run `kubectl` commands, have a look at this excellent tutorial series by VMware - https://kube.academy/courses/the-kubernetes-machine

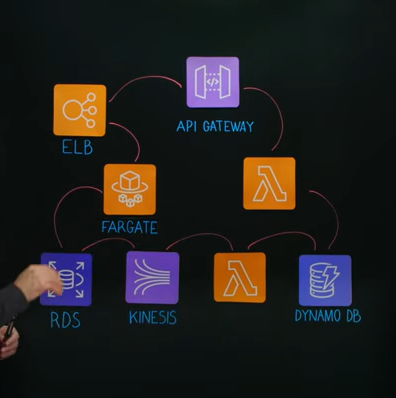

Some key components of the K8s ecosystem are described below. The control plane consists of the API server, Scheduler, etcd and Controller Manager.

- kubectl: A command line tool that sends HTTP API requests to the K8s API server. The config parameters in your YAML file are converted to JSON and sent in the request body to the API server, which is the front end of the control plane - e.g. as a POST request when a new resource is created.

- etcd: etcd (pronounced et-see-dee) is an open source, distributed, consistent key-value store for shared configuration, service discovery, and scheduler coordination of distributed systems or machine clusters. Kubernetes stores all of its cluster data in etcd, including configuration data, state, and metadata. Because Kubernetes is a distributed system, it requires a distributed data store such as etcd. Only the API server reads from and writes to etcd directly; every other component accesses cluster state through the API server.

- Scheduler: The kube-scheduler is the control plane component responsible for assigning pods to nodes in the cluster. We can give hints in the config for affinity/priority, but it is the Scheduler that decides which node a pod should run on, based on memory/CPU requirements and other config params. The pod itself is then started by the kubelet on the chosen node.

- Controller Manager: A collection of 30+ different controllers - e.g. deployment controller, namespace controller, etc. A controller is a non-terminating control loop (a daemon that runs forever) that regulates the state of the system - i.e. it moves the "existing state" toward the "desired state" - e.g. creating/expanding a replica set for a pod template.

- Cloud Controller Manager: A K8s cluster usually runs on some public/private cloud, and in that case it has to integrate with the respective cloud APIs to configure the underlying storage/compute/network. The Cloud Controller Manager makes API calls to the Cloud Provider to provision these resources - e.g. configuring persistent storage/volume for your pods.

- kubelet: The kubelet is the "node agent" that runs on each node. It registers the node with the API server and provides an interface between the Kubernetes control plane and the container runtime on each node in the cluster. After a successful registration, the primary role of the kubelet is to watch the API server for pods assigned to its node and to create and run them through the container runtime.

- kube-proxy: The Kubernetes network proxy (aka kube-proxy) is a daemon running on each node. It watches the API server for changes to services and endpoints, and configures load balancing for each service, typically through iptables rules (other modes such as IPVS also exist). Kubernetes gives pods their own IP addresses and a single DNS name for a set of Pods, and can load-balance across them.
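
To make the flow between these components a bit more concrete, here is a toy Python sketch of three of the steps described above: kubectl turning a manifest into a JSON POST request, a controller reconciling the "existing state" with the "desired state", and the Scheduler picking a node. It is only an illustration under simplifying assumptions, not how Kubernetes is implemented. The function names (`build_create_request`, `reconcile`, `schedule`), the `web` Deployment, and the node dictionaries are all hypothetical, and the "most free CPU" scoring rule is a stand-in for the real scheduler's much richer filtering and scoring.

```python
import json

# Hypothetical manifest, as it would look after the YAML file has been parsed.
manifest = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web", "namespace": "default"},
    "spec": {"replicas": 3},
}


def build_create_request(manifest):
    """Return (method, path, body) for creating the resource via the API server."""
    api_version = manifest["apiVersion"]
    # Core resources (e.g. "v1") live under /api, named groups (e.g. "apps/v1") under /apis.
    prefix = "/apis/" if "/" in api_version else "/api/"
    namespace = manifest["metadata"].get("namespace", "default")
    # Naive pluralisation; good enough for kinds like Deployment or Pod.
    resource = manifest["kind"].lower() + "s"
    path = f"{prefix}{api_version}/namespaces/{namespace}/{resource}"
    return "POST", path, json.dumps(manifest)


def reconcile(desired_replicas, running_pods):
    """One pass of a control loop: list the actions that close the gap."""
    diff = desired_replicas - len(running_pods)
    if diff > 0:
        # Too few pods: ask for new ones (names are assigned later).
        return [("create", None)] * diff
    if diff < 0:
        # Too many pods: delete the surplus.
        return [("delete", name) for name in running_pods[:-diff]]
    return []  # Existing state already matches desired state.


def schedule(pod_request, nodes):
    """Filter nodes that can fit the pod, then pick the one with most free CPU."""
    feasible = [
        node for node in nodes
        if node["free_cpu"] >= pod_request["cpu"]
        and node["free_mem"] >= pod_request["mem"]
    ]
    if not feasible:
        return None  # No node fits; the pod would stay pending.
    return max(feasible, key=lambda node: node["free_cpu"])["name"]


if __name__ == "__main__":
    method, path, body = build_create_request(manifest)
    print(method, path)
    print(reconcile(manifest["spec"]["replicas"], ["web-a"]))
    nodes = [
        {"name": "node-1", "free_cpu": 0.5, "free_mem": 512},
        {"name": "node-2", "free_cpu": 2.0, "free_mem": 2048},
    ]
    print(schedule({"cpu": 1.0, "mem": 256}, nodes))
```

In a real cluster the controller would run `reconcile` in an endless loop against state read from the API server, and the kubelet on the selected node would be the component that actually starts the pod.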